Quantum computing is one of the most promising new technologies today. Unlike classical computers, whose bits are always either 0 or 1, quantum computers exploit quantum-mechanical effects to solve certain problems much faster. In 2024 and 2025, quantum computing began moving from theory toward practical use.

Scientists showed real results, such as faster problem solving and better control of quantum systems. Companies like Google, Microsoft, and IBM made big progress with new quantum chips and useful experiments. Because of these advances, quantum computing is no longer just a future idea.

This quantum computing tutorial explains why the field matters and why now is a good time to start learning it.

What is Quantum Computing?

Quantum computing is an exciting area of research that combines ideas from physics and computer science. It uses the principles of quantum mechanics, which is the science that explains how very small particles, like atoms and photons, behave. By tapping into these unique properties, quantum computers can solve certain types of problems much faster than traditional computers.

This emerging technology has the potential to transform how we process, store, and manage information. In this quantum computing tutorial, we explore the basic concepts, working principles, and importance of quantum computing to help beginners understand this powerful and futuristic technology.

How It Works

Traditional computers work like a series of light switches, turning on or off to represent the numbers 0 and 1. In contrast, quantum computers use what are called qubits. A qubit can be thought of as a switch that can be off (0), on (1), or a weighted combination of both at the same time. This ability, known as superposition, lets a quantum computer represent many candidate solutions at once. Carefully designed algorithms then use interference to make the correct answers more likely to appear when the qubits are measured. This makes quantum computers potentially much more powerful for certain complex problems.

Why Quantum Computing Matters

Before we move further into this quantum computing tutorial, it is important to understand how classical and quantum computers differ. Both are machines designed to solve problems, but they work in very different ways. Classical computers process information using bits that are either 0 or 1, while quantum computers use the principles of quantum mechanics to handle information in a fundamentally different way. A classical computer can, in principle, handle any task that a quantum computer can, but it might take far longer. For some specific problems, quantum computers can be dramatically faster, and for a few the speedup is exponential.

Here are a few examples:

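- Breaking Encryption: A classical computer trying to factor the large numbers behind RSA encryption would need an impractically long time, far more than thousands of years for the key sizes used today. A sufficiently large, error-corrected quantum computer running Shor's algorithm could in principle do it in hours. No such machine exists yet.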

- Drug Discovery: Quantum computers are great at simulating how molecules interact with each other. This process can be incredibly complex, and classical computers struggle with it, but quantum computers can handle it much more easily, speeding up the discovery of new drugs.

- Optimisation: When it comes to figuring out the best way to manage complex logistics, like shipping goods around the world or planning routes, quantum computers can solve these complicated problems much quicker, potentially saving companies billions of dollars.

In short, while classical computers are powerful, there are specific situations where quantum computers can really shine and provide significant advantages, which is why understanding this difference is an important part of any quantum computing tutorial.

Historical Context

In 1998, a team of scientists, including Isaac Chuang, Neil Gershenfeld, and Mark Kubinec, built the first quantum computer, which had 2 basic units of information called qubits. Since then, the world of quantum computing has seen amazing advancements:

- In 2019, Google made headlines by claiming they achieved "quantum supremacy," meaning their quantum computer, named Sycamore, performed a task much faster than any classical computer could.

- In 2024, Google showcased its Willow chip, which reduced errors in quantum calculations as more qubits were added, addressing a significant challenge that had existed for three decades.

- In 2025, for the first time, a quantum computer was able to tackle real-life problems in molecular simulation more effectively than traditional computers.

- Qubit counts have also grown steadily: IBM's 127-qubit Eagle processor passed the 100-qubit mark in 2021, and by 2025 several companies offered devices with more than 100 qubits.

These milestones represent significant progress in the exciting field of quantum computing.

Quantum Computers vs Classical Computers: Key Differences

| Aspect | Classical Computer | Quantum Computer |
|---|---|---|
| Basic Unit | Bit (0 or 1) | Qubit (0, 1, or a superposition of both) |
| Processing | Operations on definite bit values | Operations on superpositions, combined through interference |
| Speed for Specific Tasks | Slower | Potentially far faster (exponentially so for some problems) |
| Temperature | Room temperature | Often near absolute zero (e.g. superconducting qubits) |
| Stability | Highly stable | Prone to decoherence |
| Error Rate | ~0.0001% | Still being reduced (historically ~1%) |
| Scalability | Easy to scale | Extremely challenging |
| Current Use | General purpose | Specialized problems |

When Quantum Computers Excel

Quantum computers are NOT faster at everything. They excel at:

✓ Optimization problems - Finding the best solution among trillions of possibilities

✓ Simulation - Modeling quantum systems and molecular behavior

✓ Database searching - Quantum algorithms like Grover's search

✓ Factoring - Breaking RSA encryption (Shor's algorithm)

✓ Machine learning - Quantum machine learning on high-dimensional data

Where Classical Computers Still Win

Classical computers remain superior for:

✓ Everyday computing tasks

✓ Web browsing and email

✓ Most business applications

✓ Current AI/Machine learning (though quantum may eventually accelerate this)

Core Principles of Quantum Computing

Understanding quantum computing requires grasping four fundamental quantum mechanical principles, which form the foundation of any quantum computing tutorial.

1. Superposition

Definition: Superposition means a qubit can exist in multiple states simultaneously until it is measured.

Example: While a classical bit is either 0 or 1, a qubit can be in a superposition of both 0 AND 1 at the same time, with different probabilities. This is often represented mathematically as:

|ψ⟩ = α|0⟩ + β|1⟩

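Where α and β are complex probability amplitudes satisfying |α|² + |β|² = 1. Measuring the qubit gives 0 with probability |α|² and 1 with probability |β|².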

Why It Matters: Superposition lets a quantum computer hold many candidate solutions in a single state. A register of just 300 qubits is described by 2³⁰⁰ amplitudes, more than the estimated number of atoms in the observable universe.

Real-World Analogy: Imagine a coin spinning in the air; it's neither heads nor tails until it lands. A qubit is similar; it exists in a probabilistic state until measured.
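
To make the amplitude picture concrete, here is a minimal sketch in plain Python. The amplitude values (0.8 and 0.2 probabilities), the seed, and the shot count are arbitrary choices for illustration, and the figure of roughly 10⁸⁰ atoms is a commonly quoted estimate rather than a value from this tutorial.

```python
import math
import random

# A single-qubit state |psi> = alpha|0> + beta|1>, stored as two complex amplitudes.
alpha = complex(math.sqrt(0.8), 0.0)
beta = complex(0.0, math.sqrt(0.2))  # arbitrary phase; only |beta|^2 sets the odds

# Measurement probabilities are the squared magnitudes of the amplitudes.
p0 = abs(alpha) ** 2
p1 = abs(beta) ** 2
assert math.isclose(p0 + p1, 1.0)  # valid states are normalized


def measure(rng: random.Random) -> int:
    """Simulate one measurement: 0 with probability p0, otherwise 1."""
    return 0 if rng.random() < p0 else 1


rng = random.Random(7)  # fixed seed so the run is repeatable
shots = 10_000
ones = sum(measure(rng) for _ in range(shots))
print(f"P(0) = {p0:.2f}, P(1) = {p1:.2f}")
print(f"Observed fraction of 1s over {shots} shots: {ones / shots:.3f}")

# The 300-qubit comparison is plain arithmetic: 2**300 versus about 10**80 atoms.
print(2 ** 300 > 10 ** 80)
```

Each individual measurement returns a plain 0 or 1. The amplitudes only show up in the statistics over many runs.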

2. Entanglement

Definition: Entanglement is the phenomenon where two or more qubits become so strongly correlated that the state of one cannot be described independently of the others, regardless of the distance between them.

Example: If two qubits are entangled, measuring one immediately tells you what a measurement of the other will show, even if they're far apart. This correlation cannot be used to send information faster than light.

Why It Matters: Entanglement enables quantum computers to process information more efficiently than classical systems. Entangled qubits can perform coordinated operations that would require exponentially more steps on classical computers.

Bell States: The simplest example of entanglement is a Bell state. In the one shown here, the two qubits are so interconnected that they always give the same value when measured (both 0 or both 1):

|Φ⁺⟩ = (|00⟩ + |11⟩)/√2
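
The correlations in |Φ⁺⟩ can be checked with a small sampling sketch. This is a classical simulation of the measurement statistics only, with an arbitrary seed and shot count. It does not capture everything that makes entanglement non-classical.

```python
import math
import random

# Amplitudes of |Phi+> over the two-qubit basis states 00, 01, 10, 11.
amp = 1 / math.sqrt(2)
bell_state = {"00": amp, "01": 0.0, "10": 0.0, "11": amp}

# The probability of each outcome is the squared amplitude.
probabilities = {bits: abs(a) ** 2 for bits, a in bell_state.items()}

rng = random.Random(11)  # fixed seed for a repeatable run
outcomes = rng.choices(
    population=list(probabilities),
    weights=list(probabilities.values()),
    k=1000,
)

counts = {bits: outcomes.count(bits) for bits in probabilities}
print(counts)

# Each qubit alone looks like a fair coin, yet the two results always agree.
agree = all(bits[0] == bits[1] for bits in outcomes)
print("Both qubits always match:", agree)
```

Only 00 and 11 ever appear, in roughly equal numbers, because the amplitudes for 01 and 10 are zero.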

Recent Breakthrough: In 2024, Microsoft and Quantinuum achieved the entanglement of 12 logical qubits, triple the previous record. This represents a critical milestone toward practical quantum computing.

3. Interference

Definition: Interference is a way to understand how quantum waves interact with each other. Imagine it like waves in a pond: when two waves come together in a way that makes a bigger wave, that’s called constructive interference. On the flip side, when they meet in a way that cancels each other out, that's called destructive interference.

Why is This Important? In the world of quantum computing, algorithms (which are like instructions for solving problems) are designed to use these interference effects. They ensure that the wrong answers get canceled out while the right answers get amplified, making it much easier for quantum computers to find solutions quickly and efficiently.

An Example - Quantum Echoes Algorithm: A great example is Google’s 2025 Quantum Echoes algorithm. This technology leverages constructive interference to enhance measurements of tiny molecular states, leading to incredibly precise results that haven't been possible before.
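
A much smaller illustration of interference than Quantum Echoes is to apply the Hadamard gate twice to a qubit that starts in |0⟩. The sketch below is plain Python, and the helper name `hadamard` is just for this example. The two paths leading to |1⟩ cancel, while the paths leading to |0⟩ reinforce each other.

```python
import math

S = 1 / math.sqrt(2)


def hadamard(state):
    """Apply the Hadamard gate to a single-qubit state (a0, a1)."""
    a0, a1 = state
    return (S * (a0 + a1), S * (a0 - a1))


start = (1.0, 0.0)       # the qubit begins in |0>
once = hadamard(start)   # equal superposition of |0> and |1>
twice = hadamard(once)   # the two paths recombine

print("after one H :", once)
print("after two H :", twice)
# Paths into |0> add up:  0.5 + 0.5 (constructive interference).
# Paths into |1> cancel:  0.5 - 0.5 (destructive interference).
```

After one gate a measurement would be a coin flip. After two, the qubit is back in |0⟩ with certainty (up to floating-point rounding).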

4. Decoherence

Definition: Decoherence happens when tiny units of information in quantum computers, called qubits, lose their special quantum traits. This can occur when they interact with things in their environment, like heat or electromagnetic signals.

Why is it Important? Decoherence is a major challenge for making quantum computers more powerful. When a qubit decoheres, it stops behaving like a quantum bit and starts acting like a regular bit, which makes errors more likely. This can hinder the performance of quantum computers we are trying to build.

The Challenge We Face: To limit decoherence, many types of qubits (superconducting qubits in particular) must be kept extremely cold, within a small fraction of a degree of absolute zero (about −273 °C), at temperatures measured in millikelvin. Yet, even under these conditions, their quantum states last only briefly, typically microseconds to milliseconds, before decoherence sets in.

Current Solutions:

Researchers are working on several ways to tackle this issue:

- Error Correction Codes: These methods help protect the information stored in qubits by spreading it across several qubits. This way, if one qubit decays, the others can help recover the lost information.

- Google’s Willow Chip: In 2024, this chip pushed error rates below the threshold at which enlarging an error-correcting code reduces the overall error instead of increasing it, a significant breakthrough.

- Topological Qubits: These newer types of qubits work by organizing information in patterns instead of relying on individual particles. This approach has been successfully showcased by research teams from Quantinuum, Harvard, and Caltech.

By addressing decoherence, scientists hope to unlock the true potential of quantum computing, leading to faster and more powerful computers in the future.
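
The idea of spreading information across several carriers can be sketched with the classical three-bit repetition code. This is only an analogy: real quantum codes cannot simply copy a qubit and must also correct phase errors. The 5% error rate, seed, and trial count below are assumed values for illustration.

```python
import random


def encode(bit: int) -> list[int]:
    """Spread one logical bit across three physical bits."""
    return [bit, bit, bit]


def add_noise(bits: list[int], p: float, rng: random.Random) -> list[int]:
    """Flip each bit independently with probability p."""
    return [b ^ 1 if rng.random() < p else b for b in bits]


def decode(bits: list[int]) -> int:
    """Majority vote recovers the logical bit if at most one bit flipped."""
    return 1 if sum(bits) >= 2 else 0


p = 0.05                   # illustrative physical error rate (assumed)
rng = random.Random(3)     # fixed seed for a repeatable run
trials = 100_000
failures = sum(decode(add_noise(encode(0), p, rng)) != 0 for _ in range(trials))

# The vote fails only when two or three bits flip.
predicted = 3 * p**2 * (1 - p) + p**3
print(f"physical error rate: {p}")
print(f"logical error rate (simulated): {failures / trials:.4f}")
print(f"logical error rate (predicted): {predicted:.4f}")
```

With these numbers the encoded bit fails well under 1% of the time, compared with 5% for an unprotected bit. The redundancy pays off only because the physical error rate is already low.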

Qubits: The Building Blocks

A qubit, short for quantum bit, is the basic building block of quantum information. Unlike a regular bit, which can only be either a 0 or a 1, a qubit is more flexible. It can exist as a 0, a 1, or even a combination of both at the same time. This unique feature allows qubits to process information in ways that classical bits can't, opening up new possibilities in computing and technology.

Qubit States

Mathematical Representation (Dirac Notation):

- |0⟩ represents the state zero

- |1⟩ represents the state one

- |+⟩ = (|0⟩ + |1⟩)/√2 represents an equal superposition of 0 and 1

- |-⟩ = (|0⟩ − |1⟩)/√2 represents an equal superposition with the opposite relative phase

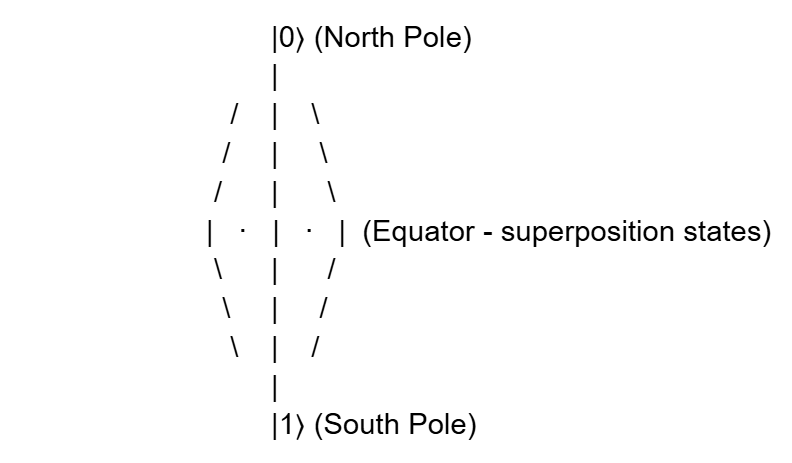

The Bloch Sphere

The Bloch sphere is a geometric representation of single-qubit states:

Key Points:

- The north pole represents |0⟩

- The south pole represents |1⟩

- The equator represents equal superpositions

- Any point on the surface of the sphere represents a valid single-qubit state
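
The link between a point on the sphere and the amplitudes can be sketched with the standard textbook parameterization |ψ⟩ = cos(θ/2)|0⟩ + e^(iφ) sin(θ/2)|1⟩, where θ is the angle from the north pole and φ the angle around the equator. The function name `bloch_to_amplitudes` is made up for this example.

```python
import cmath
import math


def bloch_to_amplitudes(theta: float, phi: float) -> tuple[complex, complex]:
    """Map Bloch angles to the amplitudes (alpha, beta) of alpha|0> + beta|1>.

    theta is the polar angle from the north pole, phi the azimuthal angle.
    """
    alpha = complex(math.cos(theta / 2))
    beta = cmath.exp(1j * phi) * math.sin(theta / 2)
    return alpha, beta


points = {
    "north pole (|0>)": (0.0, 0.0),
    "south pole (|1>)": (math.pi, 0.0),
    "equator, phi=0 (|+>)": (math.pi / 2, 0.0),
    "equator, phi=pi (|->)": (math.pi / 2, math.pi),
}

for name, (theta, phi) in points.items():
    alpha, beta = bloch_to_amplitudes(theta, phi)
    print(f"{name:24s} P(0)={abs(alpha) ** 2:.2f}  P(1)={abs(beta) ** 2:.2f}  beta={beta:.3f}")
```

The two equator points give the same 50/50 measurement odds. They differ only in the sign of the second amplitude, which is the phase difference between |+⟩ and |-⟩.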

Physical Implementations of Qubits

Different physical systems can implement qubits:

1. Superconducting Qubits (Used by IBM, Google, Rigetti)

- Artificial atoms created from superconducting circuits

- Currently, the most developed technology

- Require cooling to near absolute zero

2. Trapped Ions (Used by Quantinuum, IonQ)

- Individual atoms trapped using electromagnetic fields

- Highly coherent (longer quantum state duration)

- Excellent connectivity: any pair of qubits in a trap can interact directly

3. Photonic Qubits (Used by Xanadu, QuiX)

- Information encoded in photons (light particles)

- Potentially room-temperature operation

- Challenges: photon loss and detection

4. Topological Qubits (Experimental - Microsoft focus)

- Encode information in topological properties

- Theoretically more resistant to decoherence

- Recent development: Microsoft announced its Majorana 1 topological-qubit chip in 2025, although the underlying claims are still being scrutinized by other researchers

5. Neutral Atoms (Used by QuEra, Atom Computing)

- Atoms held in optical traps

- Highly scalable potential

- Growing industry interest

Coherence Time: A Critical Metric

Coherence time refers to the duration a qubit can hold its quantum state before it starts to lose this information due to environmental factors. In this quantum computing tutorial, understanding coherence time is important because it directly affects how long quantum calculations can run reliably. Recently, there have been some exciting advancements in this area.

- Google's Willow chip has achieved a remarkable feat by doubling the time qubits can maintain their state compared to earlier systems.

- New quantum error-correcting codes have been developed that can fix errors more quickly than they happen, marking a significant breakthrough in 2024.

These improvements are crucial for making quantum computers more reliable and efficient.
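
A back-of-the-envelope way to see why coherence time matters is to divide it by the time one gate takes. The figures below (a 50 ns gate and coherence times of 50 to 200 µs) are assumed round numbers for illustration, not measurements of any particular chip.

```python
def max_sequential_gates(coherence_time_ns: int, gate_time_ns: int) -> int:
    """Rough upper bound on back-to-back gates that fit in one coherence time."""
    return coherence_time_ns // gate_time_ns


# Illustrative numbers only (assumed, not taken from any specific device).
GATE_TIME_NS = 50
for coherence_us in (50, 100, 200):
    budget = max_sequential_gates(coherence_us * 1_000, GATE_TIME_NS)
    print(f"coherence {coherence_us:>3} us -> about {budget} gates")
```

Doubling the coherence time doubles this budget, which is why even modest improvements translate directly into deeper circuits.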

Quantum Gates and Circuits

Quantum gates are the building blocks of quantum computers, similar to how classical logic gates work in regular computers. In a beginner-friendly quantum computing tutorial, quantum gates are explained as operations used to process information stored in qubits, the basic units of quantum information. A key aspect of quantum gates is that they must preserve the quantum properties of the qubits they act on: every gate is reversible (mathematically, a unitary operation), so no information is lost while the state is being manipulated.

Common Single-Qubit Gates

1. Hadamard Gate (H)

- Creates equal superposition

- Transforms |0⟩ to (|0⟩ + |1⟩)/√2

2. Pauli-X Gate (X)

- Quantum equivalent of classical NOT

- Flips |0⟩ to |1⟩ and vice versa

3. Pauli-Z Gate (Z)

- Adds a phase

- |0⟩ remains |0⟩, but |1⟩ becomes -|1⟩

4. Phase Gate (S)

- Adds 90-degree phase shift to |1⟩

5. T Gate

- Adds 45-degree phase shift
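
Each of these gates is a 2×2 matrix acting on the pair of amplitudes. The sketch below writes them out in plain Python to keep it dependency-free. The `GATES` table and the `apply` helper are names chosen for this example, and the matrices are the standard textbook ones.

```python
import cmath
import math

S2 = 1 / math.sqrt(2)

# Each gate is a 2x2 matrix given as rows; a state is the pair (a0, a1).
GATES = {
    "H": [[S2, S2], [S2, -S2]],
    "X": [[0, 1], [1, 0]],
    "Z": [[1, 0], [0, -1]],
    "S": [[1, 0], [0, 1j]],                           # 90-degree phase on |1>
    "T": [[1, 0], [0, cmath.exp(1j * math.pi / 4)]],  # 45-degree phase on |1>
}


def apply(gate: str, state):
    """Multiply the named gate's matrix by the state vector."""
    (a, b), (c, d) = GATES[gate]
    a0, a1 = state
    return (a * a0 + b * a1, c * a0 + d * a1)


ZERO, ONE = (1, 0), (0, 1)

print("H|0> =", apply("H", ZERO))
print("X|0> =", apply("X", ZERO))
print("Z|1> =", apply("Z", ONE))
print("S|1> =", apply("S", ONE))
# Two T gates make an S gate, and two S gates make a Z gate.
print("T T|1> =", apply("T", apply("T", ONE)))
```

The phase gates leave measurement probabilities unchanged on their own. Their effect becomes visible only when a later gate, such as H, lets the amplitudes interfere.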

Multi-Qubit Gates

1. CNOT Gate (Controlled-NOT)

- Acts on two qubits: control and target

- If control is |1⟩, flips the target

- Essential for creating entanglement

2. SWAP Gate

- Exchanges states of two qubits

- Can be constructed from three CNOT gates

3. Toffoli Gate (Controlled-Controlled-NOT)

- Three-qubit gate used in quantum algorithms

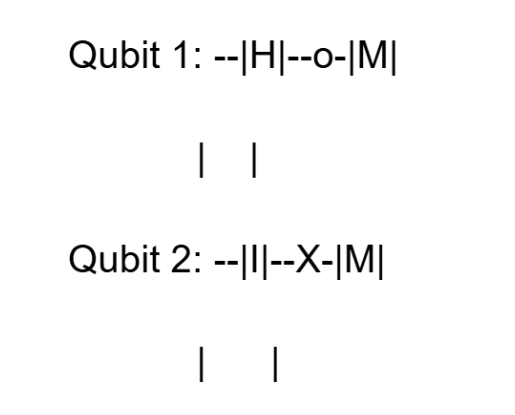
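
On basis states (definite 0s and 1s) these gates behave like reversible classical logic, which makes their truth tables easy to check. The sketch below covers only that basis-state behaviour, and the function names are invented for the example. Because gates are linear, the same rules then apply to every term of a superposition.

```python
from itertools import product


def cnot(bits, control, target):
    """Flip bits[target] when bits[control] is 1."""
    out = list(bits)
    if out[control] == 1:
        out[target] ^= 1
    return tuple(out)


def toffoli(bits, c1, c2, target):
    """Flip bits[target] only when both control bits are 1."""
    out = list(bits)
    if out[c1] == 1 and out[c2] == 1:
        out[target] ^= 1
    return tuple(out)


def swap_via_cnots(bits, a, b):
    """Exchange two bits using three alternating CNOT gates."""
    bits = cnot(bits, a, b)
    bits = cnot(bits, b, a)
    return cnot(bits, a, b)


for bits in product((0, 1), repeat=2):
    print(bits, "CNOT ->", cnot(bits, 0, 1), " SWAP ->", swap_via_cnots(bits, 0, 1))

print((1, 1, 0), "Toffoli ->", toffoli((1, 1, 0), 0, 1, 2))
```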

Quantum Circuits

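A quantum circuit is a sequence of quantum gates applied to qubits, usually drawn with one horizontal line per qubit and read from left to right.

Common symbols in text-based circuit diagrams: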

- |H| = Hadamard gate

- |I| = Identity (no operation)

- o-X = CNOT gate (o is control, X is target)

- |M| = Measurement
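
As a hedged illustration, here is a two-qubit circuit built from those pieces: a Hadamard on the first qubit, a CNOT from the first to the second, then measurement. It is simulated with a four-entry list of amplitudes in plain Python, and the function names are invented for this sketch. Real frameworks offer their own circuit objects.

```python
import math

S2 = 1 / math.sqrt(2)


def h_on_first(state):
    """Hadamard on qubit 0 of a two-qubit state [a00, a01, a10, a11]."""
    a00, a01, a10, a11 = state
    return [S2 * (a00 + a10), S2 * (a01 + a11),
            S2 * (a00 - a10), S2 * (a01 - a11)]


def cnot_first_controls_second(state):
    """CNOT with qubit 0 as control: swaps the |10> and |11> amplitudes."""
    a00, a01, a10, a11 = state
    return [a00, a01, a11, a10]


# The circuit is just an ordered list of gates, applied left to right.
circuit = [h_on_first, cnot_first_controls_second]

state = [1.0, 0.0, 0.0, 0.0]  # both qubits start in |0>
for gate in circuit:
    state = gate(state)

# Measurement: each outcome appears with probability |amplitude|^2.
for label, amplitude in zip(("00", "01", "10", "11"), state):
    print(f"P({label}) = {abs(amplitude) ** 2:.2f}")
```

The result is the Bell state (|00⟩ + |11⟩)/√2: the outcomes 00 and 11 each occur half the time, and 01 and 10 never occur.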

Popular Quantum Algorithms

1. Grover's Search Algorithm

- Searches unsorted databases quadratically faster

- Needs about √N queries, versus about N for a classical search

2. Shor's Algorithm

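- Factors large numbers exponentially faster than the best known classical methods

- Threatens RSA encryption (a large enough fault-tolerant machine could break 2048-bit RSA in hours; no such machine exists yet)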

3. Quantum Approximate Optimisation Algorithm (QAOA)

- Solves optimisation problems

- Used for portfolio optimisation, traffic flow, and logistics

4. Variational Quantum Eigensolver (VQE)

- Finds the ground state energy of molecules

- Currently one of the most widely used algorithms on today's noisy quantum hardware
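
Grover's search is small enough to simulate directly. The sketch below tracks one amplitude per item in plain Python. The list size of 64 and the marked index 42 are arbitrary, and the iteration count uses the usual rule of thumb of about (π/4)·√N rounds.

```python
import math


def grover_search(n_items: int, marked: int) -> list[float]:
    """Return the final amplitudes after the usual number of Grover iterations."""
    # Start in an equal superposition over all items.
    amps = [1 / math.sqrt(n_items)] * n_items
    iterations = math.floor(math.pi / 4 * math.sqrt(n_items))

    for _ in range(iterations):
        # Oracle: flip the sign of the marked item's amplitude.
        amps[marked] = -amps[marked]
        # Diffusion: reflect every amplitude about the mean.
        mean = sum(amps) / n_items
        amps = [2 * mean - a for a in amps]
    return amps


N, TARGET = 64, 42  # illustrative values
final = grover_search(N, TARGET)
p_success = final[TARGET] ** 2

print(f"iterations used: {math.floor(math.pi / 4 * math.sqrt(N))}")
print(f"probability of measuring item {TARGET}: {p_success:.3f}")
```

For 64 items, six iterations push the success probability above 99%, whereas a classical search would need about 32 lookups on average. The oracle's sign flip followed by the reflection about the mean is interference at work: the marked amplitude grows while the others shrink.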

Real-World Applications

1. Drug Discovery and Healthcare

Current Status: Proof-of-concept studies have been completed, and early real-world trials are in progress.

How Quantum Computing Helps:

- It can create detailed models of molecules and how they interact with drugs.

- It helps scientists understand how proteins fold and function.

- It identifies the best drug candidates by analysing them at a very fundamental level.

- It speeds up simulations for clinical trials, making the process quicker.

Companies Involved:

- IBM is working with a research institute called RIKEN to use advanced quantum technology for simulating complex molecules.

- JPMorgan Chase is exploring quantum solutions to improve healthcare and drug development.

Potential Impact: This technology has the potential to cut down the time it takes to develop new drugs from over 10 years to just 2-3 years, which could save billions of dollars in research and development costs.

2. Financial Services

Current Status: Ongoing pilot programs and research

Applications:

- Investment Management: Helping manage $2 trillion in assets more effectively.

- Risk Assessment: Analyzing financial risks and creating important metrics more quickly.

- Fraud Prevention: Identifying unusual patterns in transaction data to combat fraud.

- Secure Transactions: Using advanced methods for safe communication and data protection.

Companies Involved:

- JPMorgan Chase

- Goldman Sachs

- Deutsche Bank

- Other financial institutions looking to harness advanced technology by 2025-2030.

Business Value: An estimated market opportunity worth $2 trillion by 2035.

3. Logistics and Supply Chain Optimization

Current Status: Pilot programs are producing positive results.

Applications:

- Route Optimization: Finding the best paths for deliveries, which helps cut down on travel distances and saves fuel.

- Inventory Management: Using advanced technology to better predict how much stock will be needed.

- Scheduling: Making complex schedules simpler and more efficient, taking many factors into account.

Real-World Example:

Volkswagen has started a pilot program in Lisbon aimed at improving traffic patterns. This initiative has successfully reduced traffic jams and the time vehicles spend waiting. It shows how new computing technology can help tackle everyday logistical challenges.

Potential Impact: Companies believe they could improve their logistics operations by 10-30% in terms of efficiency.

4. Artificial Intelligence and Machine Learning

Current Status: Early stage; integration with existing machine learning tools is just beginning.

Quantum Machine Learning Applications:

- Image Recognition: Using advanced quantum techniques to help computers identify patterns in images.

- Clustering: A new approach to grouping data, similar to sorting items into categories, but using quantum methods to improve efficiency.

- Feature Mapping: This involves translating traditional data into a format that quantum computers can understand.

- Support Vector Machines: Speeding up regular algorithms that help classify data by using quantum technology.

Frameworks Being Developed:

- TorchQC: A new tool designed to help scientists develop deep learning applications that use quantum computing.

- Hybrid Models: Combining regular computing with quantum computing to take advantage of the best features of both.

Timeline: We expect to see major breakthroughs in this field after 2030.

5. Materials Science and Nanotechnology

Current Status: Emerging research area; breakthroughs in 2024-2025

Applications:

- Molecular dynamics simulation: Understanding material behaviour at the quantum level

- Catalyst design: Optimising chemical reactions for industrial processes

- Battery development: Improving energy storage materials

- Superconductor research: Understanding Cooper pairs and new materials

Recent Achievement:

- First verifiable quantum advantage for molecular simulation (Google's Quantum Echoes, 2025)

- Studied molecules with 15 and 28 atoms with precision unavailable from classical methods

Impact: Could accelerate materials development for renewable energy, carbon capture, and advanced electronics

6. Cybersecurity

Current Status: Immediate and long-term threats/opportunities

Threats:

- A sufficiently large, fault-tolerant quantum computer could break current RSA encryption

- Timeline: commonly estimated at 10-20 years before RSA can be broken
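
The reason factoring falls to a quantum computer is that Shor's algorithm reduces it to finding the period (order) of a number modulo N. The sketch below shows only the classical bookkeeping around that step. The brute-force `find_order` loop is a stand-in for the quantum part, which is where the speedup would come from, and the function names are illustrative.

```python
import math


def find_order(a: int, n: int) -> int:
    """Smallest r > 0 with a**r % n == 1 (requires gcd(a, n) == 1).

    This brute-force loop stands in for the quantum period-finding step,
    the only part of Shor's algorithm that needs a quantum computer.
    """
    if math.gcd(a, n) != 1:
        raise ValueError("a must be coprime to n")
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r


def factor_from_order(a: int, n: int):
    """Classical post-processing: turn the order of a into factors of n."""
    r = find_order(a, n)
    if r % 2 == 1:
        return None  # odd order: retry with another a
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None  # trivial case: retry with another a
    return math.gcd(half - 1, n), math.gcd(half + 1, n)


print(find_order(7, 15))         # order of 7 modulo 15
print(factor_from_order(7, 15))  # nontrivial factors of 15
```

For N = 15 and a = 7 the order is 4, which yields the factors 3 and 5. For a 2048-bit N the loop in `find_order` is hopeless classically, and that is exactly the step a quantum computer would replace.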

Solutions:

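- Post-quantum cryptography standards are being rolled out (NIST published its first finalized standards in 2024)

- Quantum key distribution (QKD) offers security based on the laws of physics rather than on computational difficulty, provided the hardware is implemented correctly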

- JPMorgan researching quantum-resistant solutions

Companies Working on This:

- IBM, Microsoft, Google on post-quantum cryptography

- Quantum networking startups on QKD

7. Climate and Environmental Modeling

Current Status: Research phase

Applications:

- Weather forecasting: Analyzing atmospheric patterns with greater accuracy

- Climate modeling: Understanding long-term climate trends

- Renewable energy: Optimizing smart grid distribution

- Carbon capture: Designing materials to capture CO₂ efficiently

Quantum Advantage:

- Quantum algorithms like QAOA are designed to tackle complex optimization problems

- May eventually handle far more interacting variables than classical systems can

Quantum Computing Hardware

The quantum computing field has entered the NISQ (Noisy Intermediate-Scale Quantum) era, with practical devices available for experimentation.

Major Quantum Hardware Providers

IBM

- IBM made the Quantum Heron processor with 133 qubits.

- Its goal is useful large-scale quantum computing.

- IBM worked with RIKEN to solve real molecular problems using quantum computers.

Google

- Google created the Willow quantum chip.

- It has 105 physical qubits and showed that logical error rates fall as the error-correcting code grows.

- Google proved strong error correction and achieved real quantum advantage.

- Its Quantum Echoes algorithm is much faster than classical computers.

Microsoft

- Microsoft is pursuing topological qubits, which are designed to be inherently more stable.

- Working with Quantinuum, it entangled 12 logical qubits with very low error rates.

- Microsoft focuses on long-term reliable quantum hardware.

IonQ

- IonQ uses trapped ion qubits.

- These qubits stay stable for a long time.

- IonQ is available on AWS and Azure cloud platforms.

Quantinuum

- Quantinuum launched the Helios quantum computer in 2025.

- It connected 12 logical qubits with very high accuracy.

- It is considered one of the most accurate commercial systems.

Rigetti Computing

- Rigetti improved fast error correction in 2024.

- It works well with classical and quantum systems together.

QuEra

- QuEra uses neutral atoms and Rydberg technology.

- Its systems can grow to hundreds of qubits.

- It mainly focuses on optimization problems.

Atom Computing

- Atom Computing is building large neutral atom systems.

- It aims to reach 1,000 or more qubits in the future.

Specialized Quantum Hardware

Application-Specific Designs:

- Bleximo: Full-stack superconducting systems for specific applications

- Qilimanjaro: Quantum ASICs for optimization problems

- QuiX: Photonic quantum computers for optimization and simulation

Key Hardware Metrics

Coherence Time: How long qubits maintain quantum states

- Improved dramatically: Google doubled coherence lifetime (2024)

Error Rate: Reduction of errors through better engineering and error correction

- Physical error rate: ~1% (historical) → improving toward <0.1%

- Logical error rate: Achieved below "threshold" for error correction (2024)
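
To see why moving from roughly 1% to 0.1% error per operation matters so much, consider the chance that a whole circuit runs without a single fault. The sketch assumes independent errors and ignores error correction, so it is a rough guide rather than a model of any real device.

```python
def circuit_success_probability(error_per_gate: float, gate_count: int) -> float:
    """Chance that no gate fails, assuming independent errors."""
    return (1.0 - error_per_gate) ** gate_count


for error_rate in (0.01, 0.001):
    for gates in (100, 1_000):
        p = circuit_success_probability(error_rate, gates)
        print(f"error {error_rate:.1%}, {gates:>5} gates -> success {p:.1%}")
```

At a 1% error rate, a 100-gate circuit succeeds only about 37% of the time, while at 0.1% the same circuit succeeds about 90% of the time. Longer circuits fall off quickly in both cases, which is why error correction is needed for large computations.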

Number of Qubits: Raw qubit count

- Current: roughly 100 to 1,000+ physical qubits, depending on the platform

- Goal: 1000+ logical qubits for practical applications

Connectivity: How qubits can interact

- Recent Breakthrough: IBM demonstrated logical qubit entanglement with overlapping codes, overcoming connectivity limitations (2024)

Getting Started with Quantum Computing

Before learning quantum computing, you should have:

- Linear Algebra: Understanding of vectors, matrices, complex numbers

- Probability: Basic probability theory and statistics

- Programming: Proficiency in Python or similar language

Getting Access to Quantum Computers

Free Cloud Access:

- IBM Quantum Experience: Register at quantum-computing.ibm.com

  - Access to 5+ real quantum processors

  - Thousands of free circuit runs per month

- Amazon Braket: Free tier available on AWS

  - Simulator access

  - Limited real device access

- Azure Quantum: Microsoft cloud platform

  - Multiple backends available

  - Free credits for new users

Beginner-Friendly Projects

Project 1: Create a Bell State (5 minutes)

- Creates quantum entanglement between two qubits

- First step in understanding multi-qubit systems

Project 2: Implement Deutsch Algorithm (30 minutes)

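- Demonstrates a quantum advantage in the number of function calls needed

- Decides whether a one-bit function is constant or balanced with a single call, where any classical method needs two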

Project 3: Build a Quantum Random Number Generator (15 minutes)

- Uses superposition to generate truly random numbers

- Practical and educational

Project 4: Quantum Teleportation (1 hour)

- Transfers quantum state between qubits

- Demonstrates key quantum protocols
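
As a taste of Project 2, the Deutsch algorithm can be simulated without any quantum hardware. The sketch below uses a four-entry amplitude list in plain Python, and all function names are invented for the example. On a cloud platform you would build the same steps with the provider's circuit tools instead.

```python
import math

S2 = 1 / math.sqrt(2)


def hadamard_on(state, qubit):
    """Apply H to one qubit of a two-qubit state indexed as 2*x + y."""
    new = [0.0] * 4
    for index, amp in enumerate(state):
        x, y = index >> 1, index & 1
        bit = x if qubit == 0 else y
        for out_bit in (0, 1):
            sign = -1.0 if (bit == 1 and out_bit == 1) else 1.0
            out_index = (out_bit << 1 | y) if qubit == 0 else (x << 1 | out_bit)
            new[out_index] += sign * S2 * amp
    return new


def oracle(state, f):
    """U_f maps |x, y> to |x, y XOR f(x)>; the circuit applies it once."""
    new = [0.0] * 4
    for index, amp in enumerate(state):
        x, y = index >> 1, index & 1
        new[x << 1 | (y ^ f(x))] += amp
    return new


def deutsch(f) -> str:
    """Classify f as constant or balanced using one oracle application."""
    state = [0.0, 1.0, 0.0, 0.0]  # start in |x=0, y=1>
    state = hadamard_on(hadamard_on(state, 0), 1)
    state = oracle(state, f)
    state = hadamard_on(state, 0)
    p_x_is_one = state[2] ** 2 + state[3] ** 2
    return "balanced" if p_x_is_one > 0.5 else "constant"


functions = {
    "f(x)=0": lambda x: 0,
    "f(x)=1": lambda x: 1,
    "f(x)=x": lambda x: x,
    "f(x)=1-x": lambda x: 1 - x,
}
for name, f in functions.items():
    print(name, "->", deutsch(f))
```

The simulation itself evaluates `f` several times because it is classical. The point is that the quantum circuit it models contains the oracle only once, yet the first qubit ends up at 0 for constant functions and 1 for balanced ones.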

Career Paths in Quantum Computing

Quantum Engineer: Develops quantum hardware and systems

- Required: Physics or EE degree, programming skills

- Employers: IBM, Google, Microsoft, IonQ, QuEra

Quantum Software Developer: Creates quantum algorithms and applications

- Required: Computer science degree, linear algebra

- Employers: Tech companies, startups, national labs

Quantum Researcher: Advances fundamental quantum science

- Required: PhD in physics or computer science

- Employers: Universities, national labs (Brookhaven, Argonne, etc.)

Quantum Security Analyst: Addresses quantum computing threats and solutions

- Required: Cybersecurity background

- Employers: Government, financial institutions, defence

Conclusion

Quantum computing is the next big step in the world of computing. It has the potential to solve extremely complex problems in areas such as medicine, finance, and scientific research. Recent progress in 2024 and 2025 shows that quantum computers are steadily moving from theoretical ideas to real-world applications, including molecule simulation and improved error reduction techniques.

Today, learners can access quantum computers through cloud platforms, making hands-on learning easier than ever before. Students, working professionals, and entrepreneurs can all benefit from understanding this emerging technology. Through a well-structured quantum computing tutorial, beginners can learn the fundamentals, explore practical examples, and build a strong foundation. By starting early, using free online resources, and experimenting with small projects, anyone can prepare for the future. Quantum computing is expected to transform many industries and to solve problems that classical computers cannot handle in any practical amount of time.